With some of Tesla's and SpaceX's DNA, its engineering capabilities are formidable.

Grok 3, the latest flagship large model from Musk's xAI, has finally arrived! At noon, viewers began gathering for Musk's livestreamed announcement.

After a 20-minute wait, with online viewership reaching 1 million, the livestream finally began, with Musk in attendance. Its theme was 'Our mission is to understand the entire universe.'

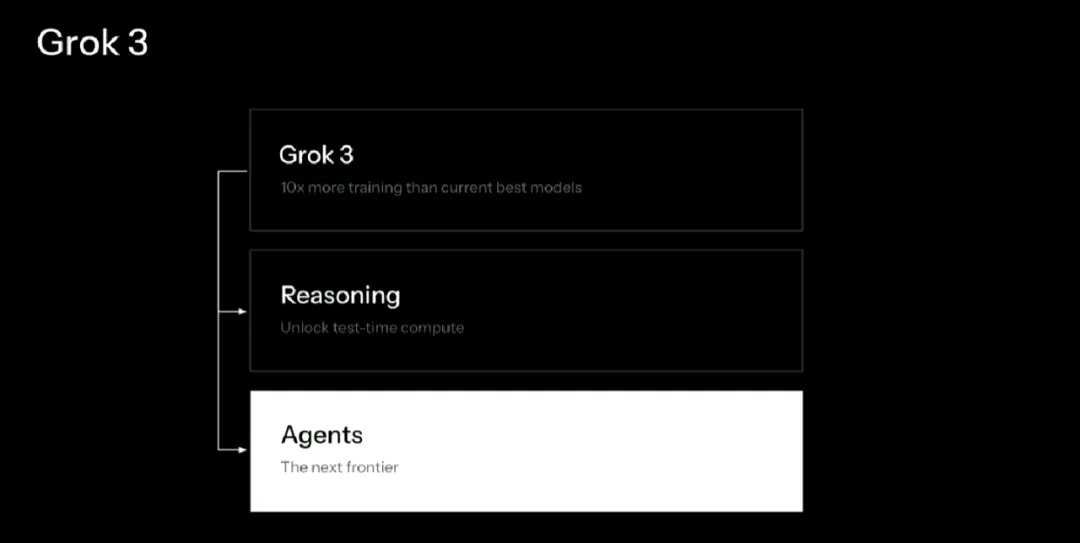

According to the engineers, Grok 3 is, strictly speaking, a family of models rather than a single model. Its lightweight version, Grok 3 mini, answers questions faster at the cost of some accuracy. Not all models are available yet, but they will roll out gradually starting today.

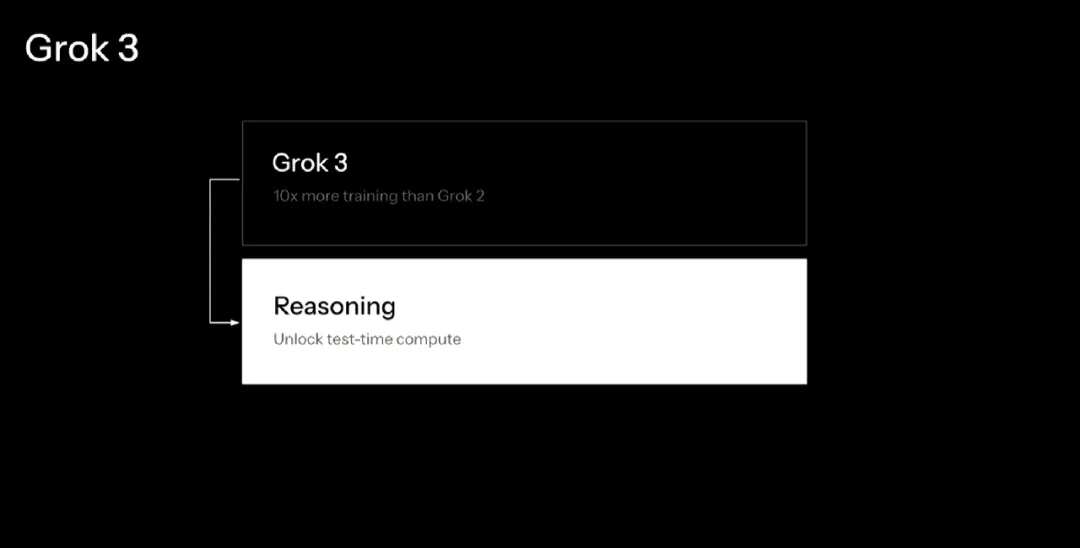

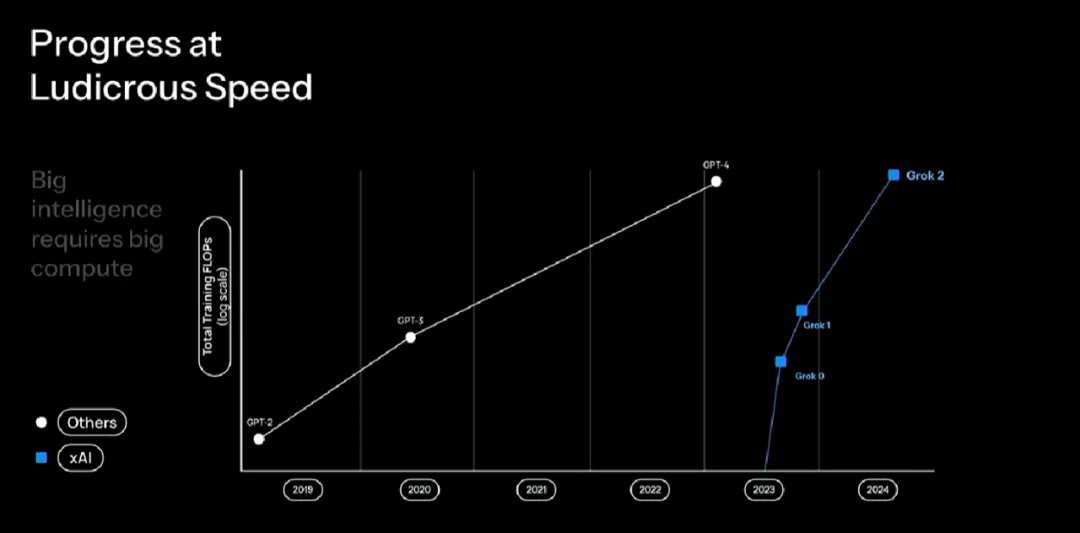

Musk stated outright that Grok 3 is '10 times better' than Grok 2 and was trained on an expanded dataset.

In addition, the voice mode originally scheduled for release has been postponed, though not for long: about a week.

However, any large model in the spotlight these days faces close scrutiny. xAI trained Grok 3 in a huge data center in Memphis containing approximately 200,000 GPUs.

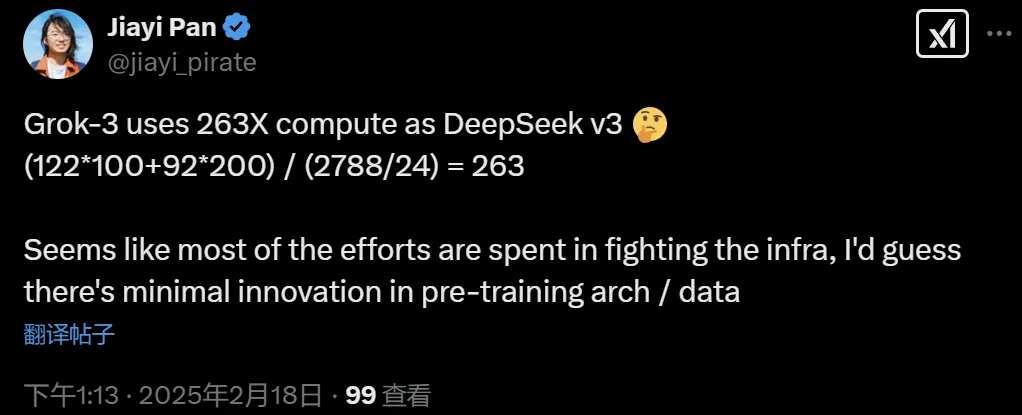

After Grok 3's release, some pointed out that it consumed 263 times the computing power of DeepSeek V3, though it is unclear whether this calculation is accurate.

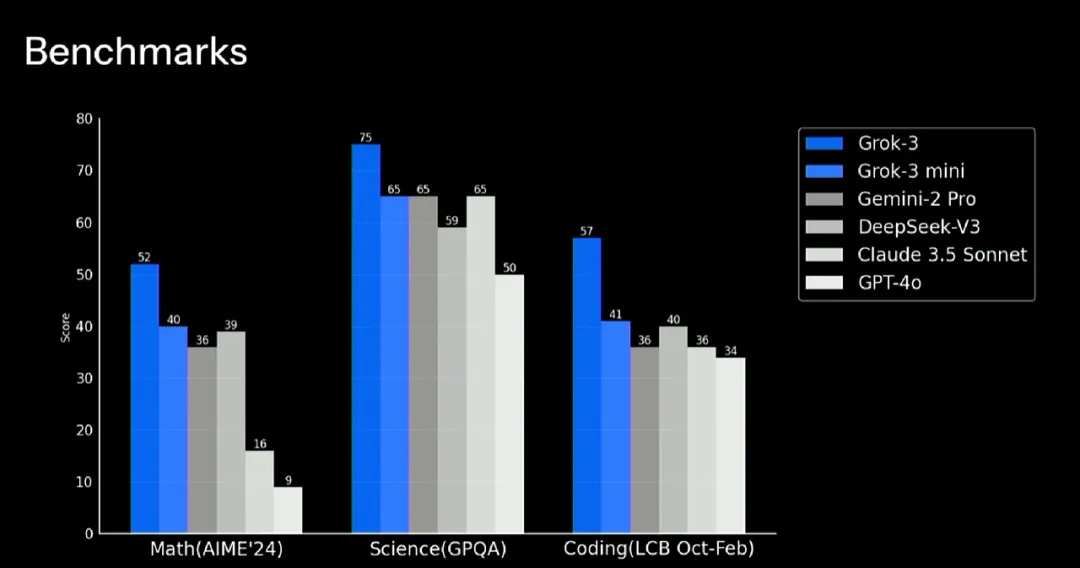

Grok 3 appears to prioritize raw performance, so let's look at the benchmark results.

In Math (AIME 24), Science (GPQA), and Coding (LCB Oct-Feb), Grok-3 significantly outperformed Gemini-2 Pro, DeepSeek-V3, Claude 3.5 Sonnet, and GPT-4o. These comparison models perform roughly on par with Grok-3 mini.

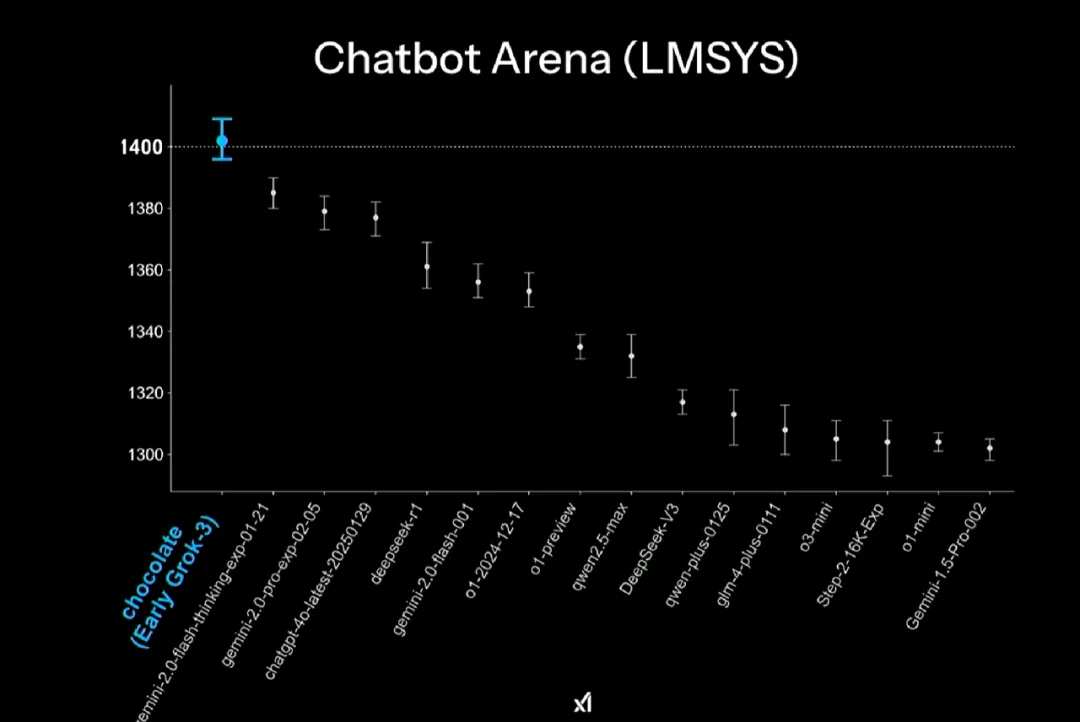

In Chatbot Arena (LMSYS), the large-model leaderboard, an early version of Grok-3 ranked first with a score of 1402, surpassing all other models, including DeepSeek-R1, and becoming the first model ever to break the 1400-point barrier.

The following chart shows how Grok-3 ranks against other models in programming, mathematics, creative writing, instruction following, long queries, and multi-turn dialogue. Grok-3 ranks first in every category.

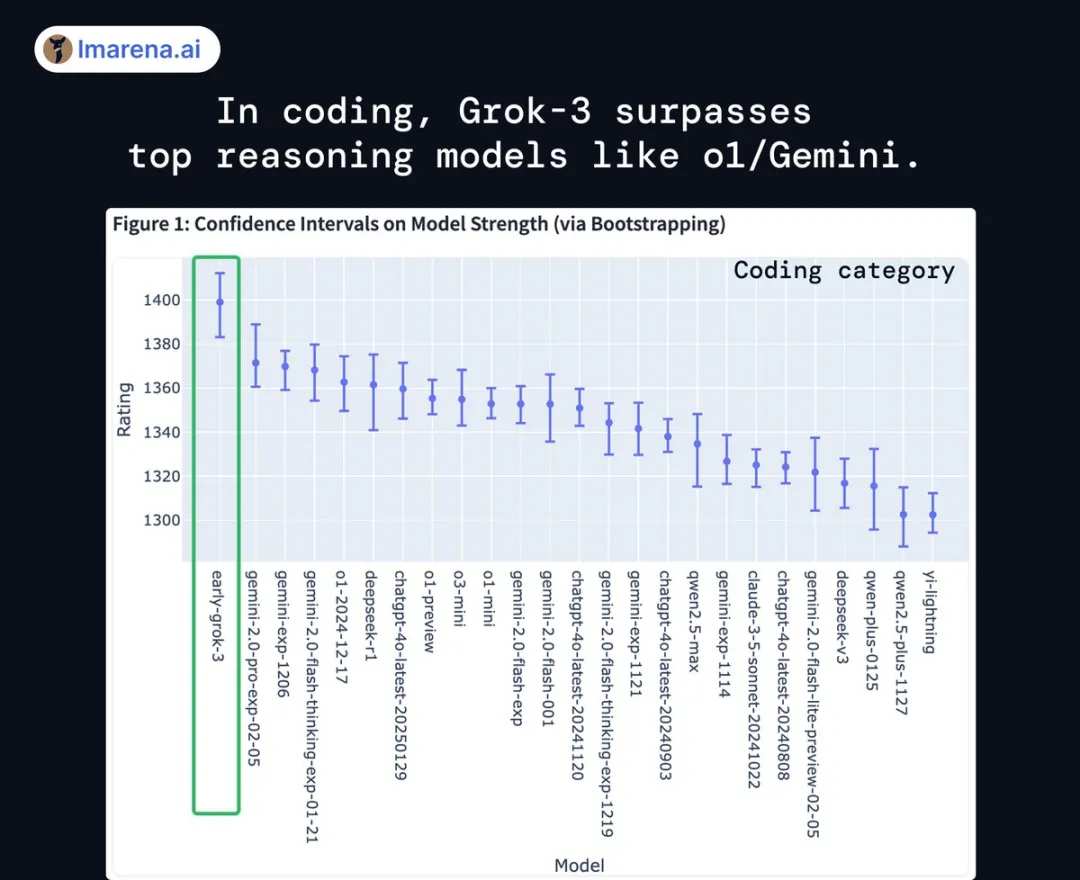

In coding tasks, for example, Grok-3 outperformed major reasoning models such as o1, DeepSeek-R1, and Gemini-thinking.

Shortly after Grok-3's release, AI expert Andrej Karpathy shared his early-access experience. His initial impressions can be summarized as follows:

- Grok-3 + Thinking is close to the state of the art set by OpenAI's strongest model (o1-pro, at $200 a month), and slightly better than DeepSeek-R1 and Gemini 2.0 Flash Thinking.
- Like DeepSeek-R1, Grok-3 attempts to solve the Riemann Hypothesis, whereas many other models (o1-pro, Claude, Gemini 2.0 Flash Thinking) give up immediately and simply note that it is an important unsolved problem.
- DeepSearch is roughly on par with Perplexity's DeepResearch product, but has not yet reached the level of OpenAI's recently released "Deep Research," which feels more thorough and reliable.

Its reasoning ability is unmatched, surpassing all competitors such as o3 mini and R1.

Meanwhile, Grok-3 supports reasoning and unlocks test-time compute, which means the fiercely competitive reasoning-model market has gained a strong new contender.

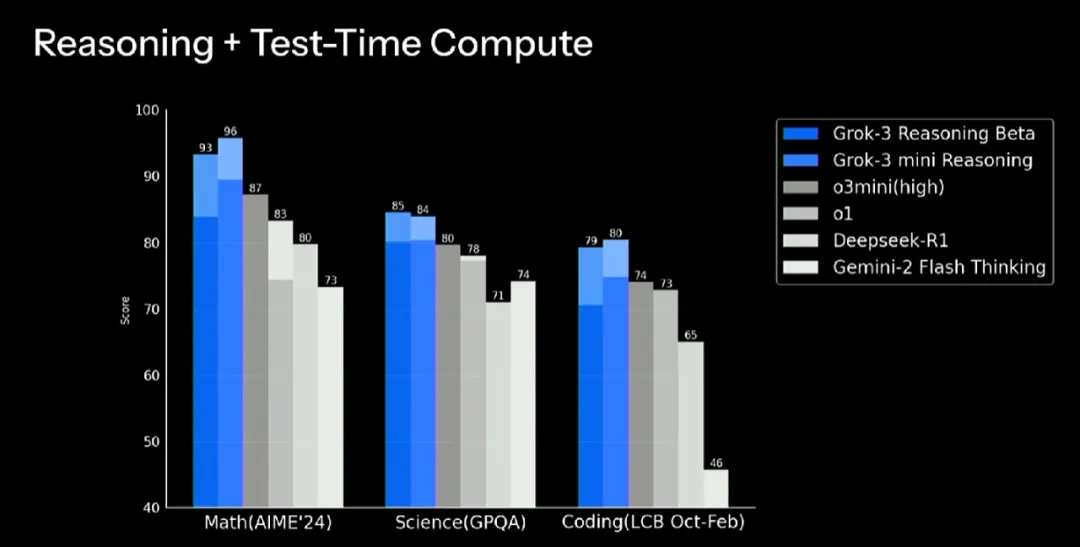

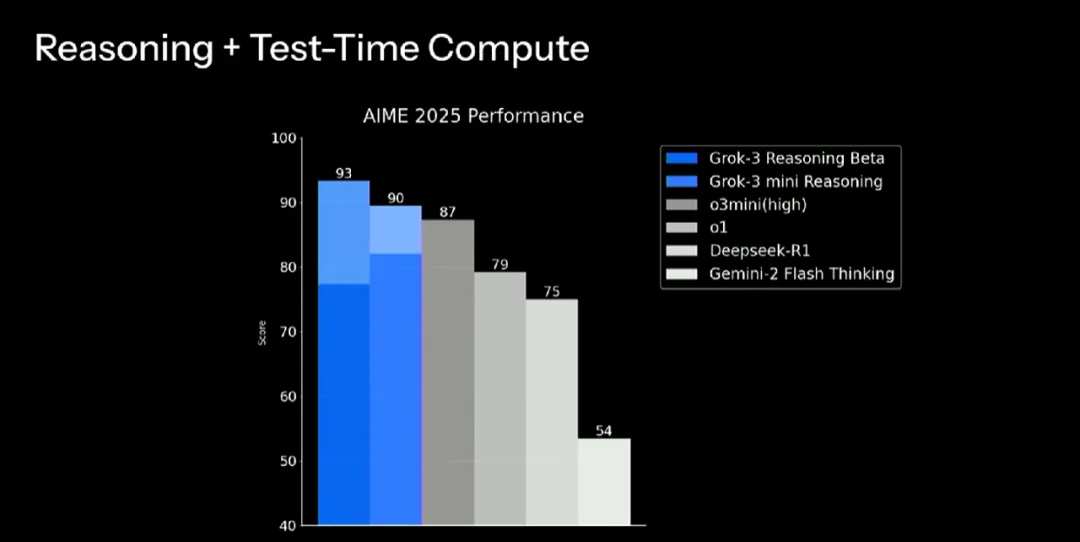

Grok-3's reasoning benchmark results bear this out. They cover two versions: Grok-3 Reasoning Beta and Grok-3 mini Reasoning.

When given more test-time compute (the extended portion shown in the figure), Grok-3's "reasoning + test-time compute" performance on mathematics (AIME'24), science (GPQA), and coding (LCB Oct-Feb) surpasses OpenAI's o3 mini (high) and o1, DeepSeek R1, and other reasoning models such as Google's Gemini 2 Flash Thinking.

In the AIME 2025 mathematics competition, Grok-3 Reasoning Beta and Grok-3 mini Reasoning took the top two spots, well ahead of other reasoning models.

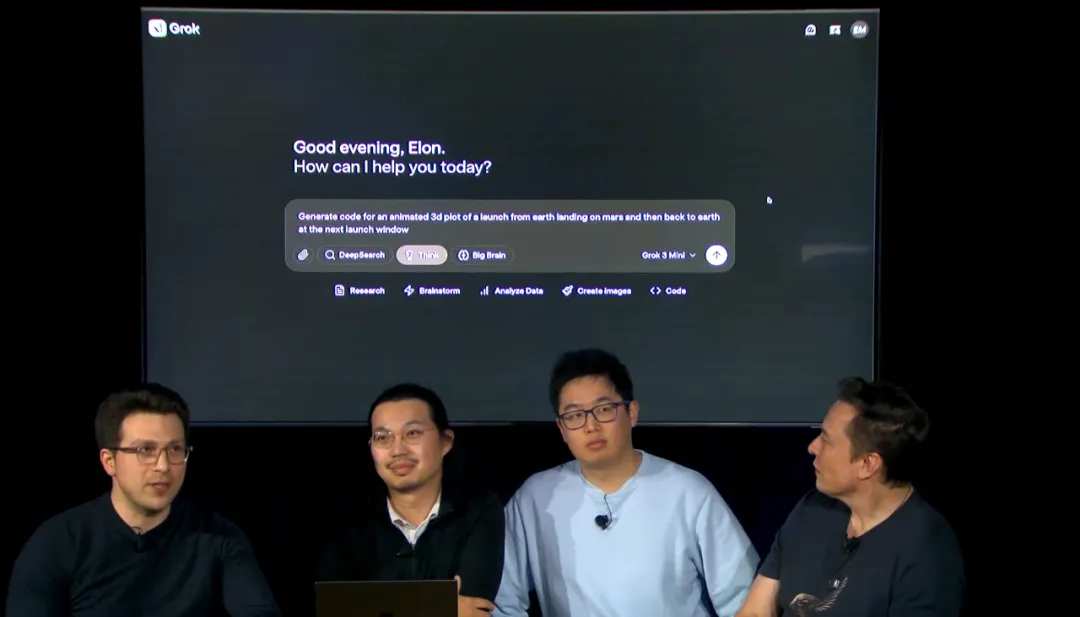

The user interface of Grok-3 is shown below, where we can see its Think mode.

In practice, like other reasoning models, Grok-3 displays its complete thinking process and how long it spent thinking.

Grok-3 also supports a 'Big Brain' mode, which applies more computing power to think more deeply about a problem.

Grok-3 can do things beyond your imagination, such as 'generate code for a 3D animation of a launch from Earth, a landing on Mars, and a return to Earth at the next launch window.'

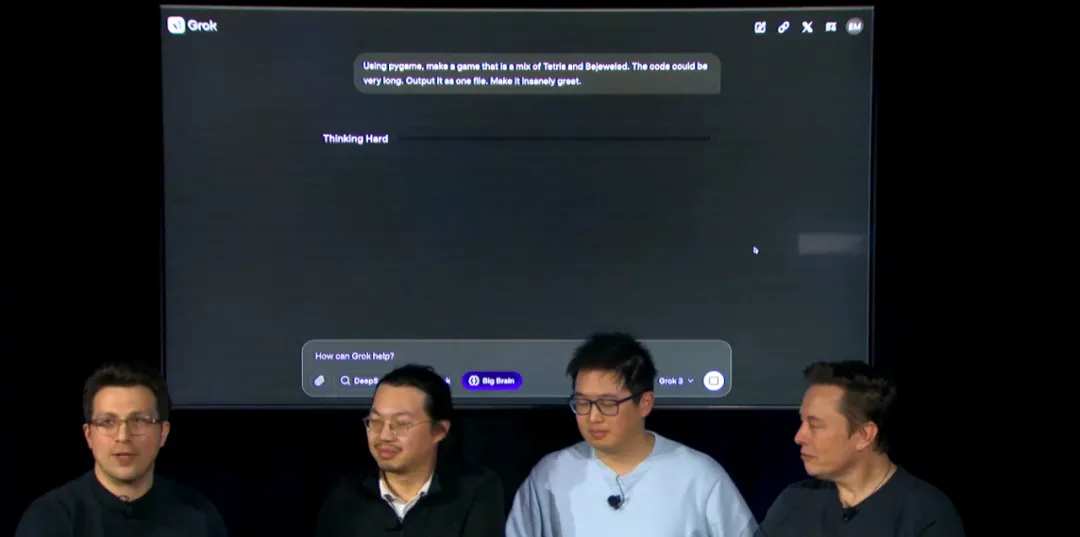

Another example: 'create a game that combines Tetris and Bejeweled using pygame; the code can be long, and the effect should be cool':

Judging from the demonstrations, Grok-3's capabilities are fully up to par.

A next-generation intelligent agent has arrived: DeepSearch.

Through DeepSearch, Grok-3 also offers powerful agent capabilities, including in-depth research, brainstorming, data analysis, image generation, code writing, and debugging.

DeepSearch is comparable to Deep Research, launched earlier by OpenAI, which uses internet access to complete in minutes complex research tasks that would typically take human experts hours.

Let's look at a few examples in which Grok-3, in DeepSearch mode, performs deeper searches while also drawing on its thinking abilities. The search steps themselves are displayed as well.

In the following example, Grok-3 is asked to create a full March Madness bracket prediction.

Finally, here is the relevant information about subscriptions and pricing:

X Premium+ subscribers will be the first to get Grok 3, while other features require a subscription to what xAI calls SuperGrok.

SuperGrok costs $30 per month or $300 per year, and it unlocks more reasoning and DeepSearch queries as well as unlimited image generation.
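To put the two SuperGrok price points side by side, the short calculation below compares a year of monthly payments with the annual plan. It uses only the two list prices quoted above and assumes no taxes, promotions, or regional pricing differences.

$$
\begin{aligned}
12 \times \$30 &= \$360 && \text{(one year of monthly payments)} \\
\$360 - \$300 &= \$60 && \text{(saved with the annual plan)} \\
\tfrac{60}{360} &\approx 16.7\% && \text{(effective annual discount)}
\end{aligned}
$$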

After the launch, the team also held a brief Q&A based on questions from online users.

The team mentioned that xAI will release a Grok-powered voice app (expected in about a week), and that the model will retain some memory of users' voice conversations with it.

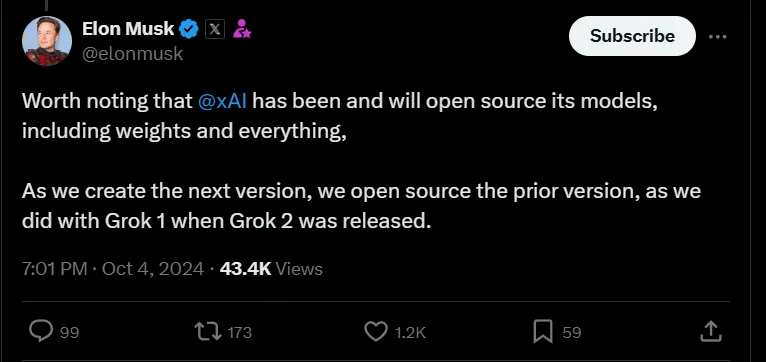

Musk also reiterated xAI's open-source policy: the previous-generation model is open-sourced once the latest model is released. He said Grok 2 would be open-sourced after the stable version of Grok 3 ships, which may take a few months. This looks less impressive than DeepSeek's open-source approach.

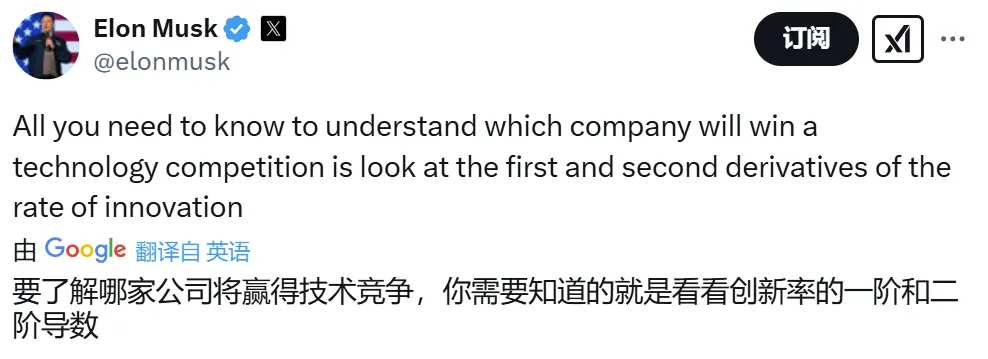

The event concluded with a demo video of xAI's voice mode. Afterward, Musk tweeted a hint that his company would win the tech race against OpenAI, since xAI's rate of innovation has higher first and second derivatives.

What do you think about Musk's announcement today?

Editor/danial
